Large language models are probabilistic: they predict the next most likely token. When you ask one to “critique,” you populate its context window with high-quality reasoning and negative constraints (e.g., what not to do). The final generation is then statistically more likely to follow that higher standard because the logic is now part of the immediate conversation history.

Try this:

Draft: Ask for your content as usual. “Write a cold email to a potential client about our new web design services.”

Critique: Don't just ask for a better version. Ask the AI to analyze its draft against specific criteria.…
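The draft-then-critique flow can be sketched in a few lines of Python. This is a minimal illustration and not tied to any particular SDK: the `llm` callable, the role/content message format, and the wording of the critique and rewrite prompts are all assumptions made for the example. The point it shows is that the critique stays in the same conversation history that the final generation reads.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]
# Hypothetical signature: takes the chat history, returns the assistant's reply.
LLM = Callable[[List[Message]], str]


def draft_critique_revise(llm: LLM, task: str, criteria: List[str]) -> str:
    """Run draft -> critique -> final rewrite inside one conversation history."""
    history: List[Message] = [{"role": "user", "content": task}]

    # Step 1: draft. Ask for the content as usual.
    history.append({"role": "assistant", "content": llm(history)})

    # Step 2: critique against specific criteria, not just "make it better".
    checklist = "\n".join(f"- {c}" for c in criteria)
    history.append({
        "role": "user",
        "content": "Critique your draft against these criteria. "
                   "List concrete weaknesses and what to avoid:\n" + checklist,
    })
    history.append({"role": "assistant", "content": llm(history)})

    # Step 3: final generation. The critique is now part of the context.
    history.append({
        "role": "user",
        "content": "Rewrite the draft so that it fixes every issue you listed.",
    })
    return llm(history)
```

To try it, pass any function that sends the message list to your model and returns its text reply, together with your own criteria (for example, a hypothetical "Is the subject line specific?").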

-

This snippet shows a sample LLM call through an Azure OpenAI endpoint.

    # pip install agent-framework python-dotenv
    import asyncio
    import os

    from dotenv import load_dotenv
    from agent_framework.azure import AzureOpenAIChatClient

    # Load environment variables from .env file
    load_dotenv()

    api_key = os.getenv("AZURE_OPENAI_API_KEY")
    deployment_name = os.getenv("AZURE_OPENAI_DEPLOYMENT")
    endpoint = os.getenv("AZURE_OPENAI_ENDPOINT")
    api_version = os.getenv("AZURE_OPENAI_API_VERSION")

    agent = AzureOpenAIChatClient(
        endpoint=endpoint,
        api_key=api_key,
        deployment_name=deployment_name,
        api_version=api_version,
    ).create_agent(
        instructions="You are a poet",
        name="Poet",
    )


    async def main():
        result = await agent.run("Write a two liner poem on nature")
        print(result.text)


    asyncio.run(main())

.env file sample:

    AZURE_OPENAI_API_KEY={paste your api key}
    AZURE_OPENAI_ENDPOINT=https://{your endpoint}.openai.azure.com/
    AZURE_OPENAI_DEPLOYMENT=o4-mini
    AZURE_OPENAI_API_VERSION=2024-12-01-preview

-

Most of us are in a transition phase from AI prototypes to production systems. Many frameworks that appeared impressive on demo servers have failed badly in real production environments. It is important to consider all architectural pillars during the design stage. Delaying these decisions only adds time and cost later. Agentic AI consumes tokens, and token usage translates directly into monetary cost. This course offers a clear explanation of AI agent caching techniques and shows how to evaluate the effectiveness of different caching strategies. Attend the course here: https://www.deeplearning.ai/short-courses/semantic-caching-for-ai-agents/
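To make the idea concrete, here is a toy sketch of a semantic cache with hit-rate tracking. It is not taken from the course. The `SemanticCache` class, the 0.8 similarity threshold, and the bag-of-words `embed` function (a stand-in for a real embedding model) are all assumptions made for illustration. A prompt that is close enough to an earlier one reuses the stored answer, so the LLM is not called and no tokens are spent.

```python
import math
import re
from collections import Counter
from typing import Callable, List, Tuple


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: bag-of-words counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * \
        math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class SemanticCache:
    """Hypothetical cache: reuse an answer when a similar prompt was seen."""

    def __init__(self, threshold: float = 0.8) -> None:
        self.threshold = threshold  # assumed value; tune per workload
        self.entries: List[Tuple[Counter, str]] = []
        self.hits = 0
        self.misses = 0

    def get_or_call(self, prompt: str, llm: Callable[[str], str]) -> str:
        query = embed(prompt)
        best = max(self.entries, key=lambda e: cosine(query, e[0]), default=None)
        if best is not None and cosine(query, best[0]) >= self.threshold:
            self.hits += 1  # similar prompt seen before: no LLM call
            return best[1]
        self.misses += 1  # nothing similar enough: pay for one LLM call
        answer = llm(prompt)
        self.entries.append((query, answer))
        return answer

    def hit_rate(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0
```

The hit rate is one simple way to compare strategies: replay the same set of prompts against caches with different thresholds or embedding functions and see how many LLM calls each one avoids, while also checking that the reused answers are still correct.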

-

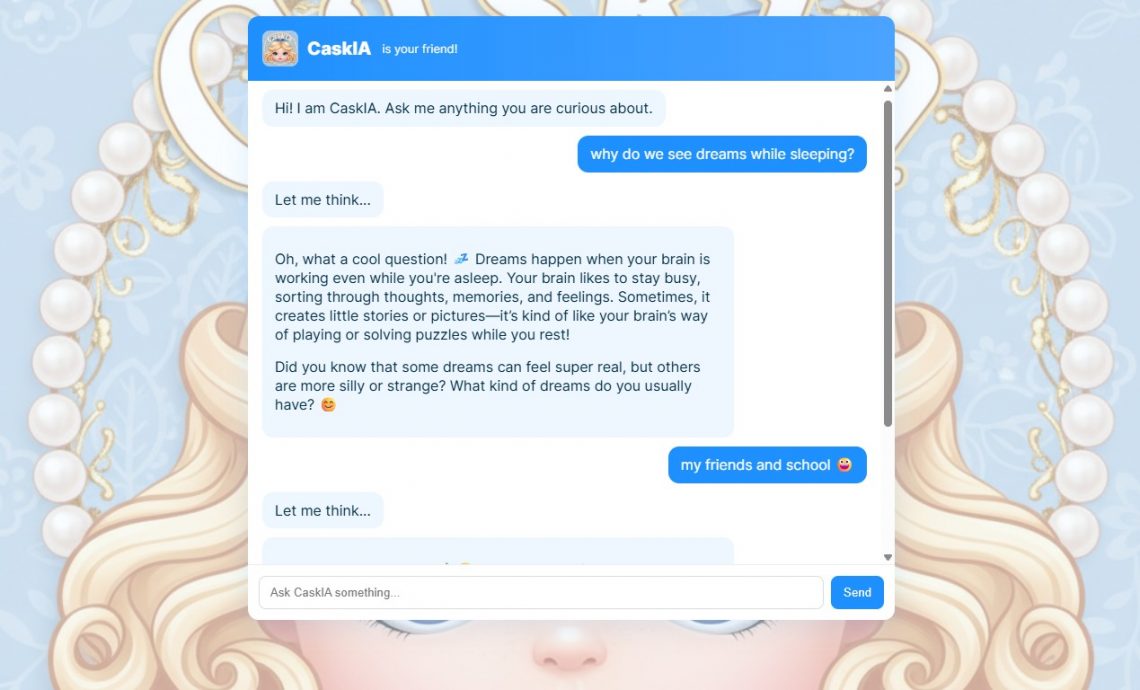

My third grader has been using modern chat assistants for his hobbies a lot lately. Today he said he wanted his own AI chatbot. He tried building one with GitHub Copilot, but the chain-of-thought prompting pushed him into creating a search-engine-style chat, since he did not realize he needed to connect an actual LLM to make it intelligent. I stepped in and helped him build a simple app using the free version of GitHub Copilot, and also explained how it works. It took about 30 minutes to put everything together. I am sharing it publicly so others…

-

Registration: https://www.meetup.com/kmug-meetup/events/311516507/ Agenda

-

I see that many people are still confused by the term Copilot in the Microsoft context. Are Microsoft Copilot, M365 Copilot, and Microsoft Copilot Studio different from one another? If so, how are they different?